It is very simple to scale out a TKGm cluster. The command

tanzu cluster scale cluster_name --controlplane-machine-count 5 --worker-machine-count 10

does this for you. This is known as horizontal scaling, or scale-out. But what if you want to scale up the control plane or worker nodes, giving each node more CPU or memory?

This post shows how to scale up TKGm worker nodes. In short: how to increase or decrease the CPU, RAM and disk of the worker nodes.

Getting started

Scaling up is not as simple as scaling out. Follow the steps below to scale up the worker nodes of your TKGm cluster.

Step 1.

Run the following command to obtain the list of vSphere machine templates that TKGm uses to deploy control plane and worker nodes.

kubectl get vspheremachinetemplate

    NAME                            AGE
    tkg-ssc-control-plane           3d1h
    tkg-ssc-worker                  3d1h
    tkg-workload-01-control-plane   3d
    tkg-workload-01-worker          3d

You can see that there are four machine templates.

Let's say we want to increase the size of the worker nodes in the tkg-workload-01 cluster.

Let's describe the tkg-workload-01-worker machine template.

kubectl describe vspheremachinetemplate tkg-workload-01-worker

    Name: tkg-workload-01-worker
    Namespace: default
    Labels: <none>
    Annotations: <none>
    API Version: infrastructure.cluster.x-k8s.io/v1alpha3
    Kind: VSphereMachineTemplate
    Metadata:
      Creation Timestamp: 2021-10-29T14:11:25Z
      Generation: 1
      Managed Fields:
        API Version: infrastructure.cluster.x-k8s.io/v1alpha3
        Fields Type: FieldsV1
        fieldsV1:
          f:metadata:
            f:annotations:
              .:
              f:kubectl.kubernetes.io/last-applied-configuration:
          f:spec:
            .:
            f:template:
              .:
              f:spec:
                .:
                f:cloneMode:
                f:datacenter:
                f:datastore:
                f:diskGiB:
                f:folder:
                f:memoryMiB:
                f:network:
                  .:
                  f:devices:
                f:numCPUs:
                f:resourcePool:
                f:server:
                f:storagePolicyName:
                f:template:
        Manager: kubectl-client-side-apply
        Operation: Update
        Time: 2021-10-29T14:11:25Z
        API Version: infrastructure.cluster.x-k8s.io/v1alpha3
        Fields Type: FieldsV1
        fieldsV1:
          f:metadata:
            f:ownerReferences:
              .:
              k:{"uid":"be507594-0c05-4d30-8ed6-56811733df23"}:
                .:
                f:apiVersion:
                f:kind:
                f:name:
                f:uid:
        Manager: manager
        Operation: Update
        Time: 2021-10-29T14:11:25Z
      Owner References:
        API Version: cluster.x-k8s.io/v1alpha3
        Kind: Cluster
        Name: tkg-workload-01
        UID: be507594-0c05-4d30-8ed6-56811733df23
      Resource Version: 45814
      UID: fc1f3d9f-078f-4282-b93f-e46593a760a5
    Spec:
      Template:
        Spec:
          Clone Mode: fullClone
          Datacenter: /TanzuPOC
          Datastore: tanzu_ssd_02
          Disk Gi B: 40
          Folder: /TanzuPOC/vm/tkg-vsphere-workload
          Memory Mi B: 16384
          Network:
            Devices:
              dhcp4: true
              Network Name: /TanzuPOC/network/TKG-wkld
          Num CP Us: 4
          Resource Pool: /TanzuPOC/host/Workload Cluster 1/Resources/tkg-vsphere-workload
          Server: vcenter.vmwire.com
          Storage Policy Name:
          Template: /TanzuPOC/vm/ubuntu-2004-kube-v1.21.2+vmware.1
    Events: <none>

You can see that this machine template has 16 GB of RAM (Memory Mi B: 16384) and 4 vCPUs. Let's say we want to increase the workers to 120 GB of RAM and 24 vCPUs. How would we do this?
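
The memory value in the machine template is given in MiB (16384 for the current 16 GB), so the 120 GB target has to be converted before it goes into the template. A quick sketch of the arithmetic, assuming 1 GB here means 1024 MiB; the variable names are only for illustration.

```shell
# Convert the target worker memory to the MiB value used by memoryMiB.
# Assumption: "120 GB" is treated as 120 x 1024 MiB.
target_gb=120
memory_mib=$((target_gb * 1024))
echo "memoryMiB: ${memory_mib}"   # prints "memoryMiB: 122880"
```

This is the value that appears as memoryMiB in the new machine template.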

Step 2.

We need to clone the machine template that is currently in use into a new one and then apply it. Start by exporting the current template to a file.

kubectl get vspheremachinetemplate tkg-workload-01-worker -o yaml > new-machine-template.yaml

Step 3.

Now that we have exported the current machine template to a new YAML file, we can edit it to suit our needs. Edit the file and make the changes described below.

    apiVersion: infrastructure.cluster.x-k8s.io/v1alpha3
    kind: VSphereMachineTemplate
    metadata:
      annotations:
        kubectl.kubernetes.io/last-applied-configuration: |
          {"apiVersion":"infrastructure.cluster.x-k8s.io/v1alpha3","kind":"VSphereMachineTemplate","metadata":{"annotations":{},"creationTimestamp":"2021-10-29T14:11:25Z","generation":1,"name":"tkg-workload-01-worker-scale","namespace":"default","ownerReferences":[{"apiVersion":"cluster.x-k8s.io/v1alpha3","kind":"Cluster","name":"tkg-workload-01","uid":"be507594-0c05-4d30-8ed6-56811733df23"}],"resourceVersion":"45814","uid":"fc1f3d9f-078f-4282-b93f-e46593a760a5"},"spec":{"template":{"spec":{"cloneMode":"fullClone","datacenter":"/TanzuPOC","datastore":"tanzu_ssd_02","diskGiB":40,"folder":"/TanzuPOC/vm/tkg-vsphere-workload","memoryMiB":122880,"network":{"devices":[{"dhcp4":true,"networkName":"/TanzuPOC/network/TKG-wkld"}]},"numCPUs":24,"resourcePool":"/TanzuPOC/host/Workload Cluster 1/Resources/tkg-vsphere-workload","server":"tanzuvcenter01.ete.ka.sw.ericsson.se","storagePolicyName":"","template":"/TanzuPOC/vm/ubuntu-2004-kube-v1.21.2+vmware.1"}}}}
      creationTimestamp: "2021-11-01T11:18:08Z"
      generation: 1
      name: tkg-workload-01-worker-scale
      namespace: default
      ownerReferences:
      - apiVersion: cluster.x-k8s.io/v1alpha3
        kind: Cluster
        name: tkg-workload-01
        uid: be507594-0c05-4d30-8ed6-56811733df23
      resourceVersion: "1590589"
      uid: 8697ec4c-7118-4ff0-b4cd-a456cb090f58
    spec:
      template:
        spec:
          cloneMode: fullClone
          datacenter: /TanzuPOC
          datastore: tanzu_ssd_02
          diskGiB: 40
          folder: /TanzuPOC/vm/tkg-vsphere-workload
          memoryMiB: 122880
          network:
            devices:
            - dhcp4: true
              networkName: /TanzuPOC/network/TKG-wkld
          numCPUs: 24
          resourcePool: /TanzuPOC/host/Workload Cluster 1/Resources/tkg-vsphere-workload
          server: vcenter.vmwire.com
          storagePolicyName: ""
          template: /TanzuPOC/vm/ubuntu-2004-kube-v1.21.2+vmware.1

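Change the name of the machine template in two places: the "name" value inside the last-applied-configuration annotation, and the name field under metadata. The original name was tkg-workload-01-worker; I appended “-scale” to it, so the new machine template is named tkg-workload-01-worker-scale. Also set memoryMiB to 122880 and numCPUs to 24, which are the new worker node sizes.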
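
If you would rather script these edits than make them by hand, sed can do it. The following is only a sketch: it works on a trimmed, hypothetical copy of the exported file (the file names sample-template.yaml and new-machine-template-sample.yaml are made up for illustration, and the sample contains just a few of the real fields), so adapt the patterns before using it on a real export.

```shell
# Create a trimmed sample of the exported template (illustrative subset only).
cat > sample-template.yaml <<'EOF'
apiVersion: infrastructure.cluster.x-k8s.io/v1alpha3
kind: VSphereMachineTemplate
metadata:
  name: tkg-workload-01-worker
  namespace: default
spec:
  template:
    spec:
      diskGiB: 40
      memoryMiB: 16384
      numCPUs: 4
EOF

# Rename the template and set the new size, writing the result to a new file.
# The name pattern is anchored so that only metadata.name is changed.
sed \
  -e 's/^  name: tkg-workload-01-worker$/  name: tkg-workload-01-worker-scale/' \
  -e 's/^\( *memoryMiB:\) .*/\1 122880/' \
  -e 's/^\( *numCPUs:\) .*/\1 24/' \
  sample-template.yaml > new-machine-template-sample.yaml

cat new-machine-template-sample.yaml
```

Writing to a second file rather than editing in place keeps the original export around for comparison.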

Step 4.

We can now apply the new machine template with this command

kubectl apply -f new-machine-template.yaml

We can check that the new machine template exists by running this command

kubectl get vspheremachinetemplate

    NAME                            AGE
    tkg-ssc-control-plane           3d1h
    tkg-ssc-worker                  3d1h
    tkg-workload-01-control-plane   3d
    tkg-workload-01-worker          3d
    tkg-workload-01-worker-scale    10s
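
Before moving on, it can be worth confirming that the new template really carries the new sizes. One way, sketched here as a small helper, is to read the two fields with kubectl's jsonpath output. The function name template_size is made up for this example; it assumes the same kubectl context used for the commands above.

```shell
# Print "<numCPUs> <memoryMiB>" for a given VSphereMachineTemplate.
# template_size is a hypothetical helper name, not part of kubectl or tanzu.
template_size() {
  kubectl get vspheremachinetemplate "$1" \
    -o jsonpath='{.spec.template.spec.numCPUs} {.spec.template.spec.memoryMiB}'
}

# Usage (needs access to the cluster that holds the machine templates):
#   template_size tkg-workload-01-worker-scale
```

For the template created in this post, the expectation would be 24 vCPUs and 122880 MiB.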

Step 5.

Now we can apply the new machine template to our cluster.

Before doing that, we need the machine deployment details for the tkg-workload-01 cluster. We can get this information by running these commands.

kubectl get MachineDeployment

    NAME                   PHASE     REPLICAS   READY   UPDATED   UNAVAILABLE
    tkg-ssc-md-0           Running   3          3       3
    tkg-workload-01-md-0   Running   4          4       4

We are interested in the tkg-workload-01-md-0 machine deployment, so let's describe it.

kubectl describe MachineDeployment tkg-workload-01-md-0

    Name: tkg-workload-01-md-0
    Namespace: default
    Labels: cluster.x-k8s.io/cluster-name=tkg-workload-01
    Annotations: machinedeployment.clusters.x-k8s.io/revision: 3
    API Version: cluster.x-k8s.io/v1alpha3
    Kind: MachineDeployment
    Metadata:
      Creation Timestamp: 2021-10-29T14:11:25Z
      Generation: 7
      Managed Fields:
        API Version: cluster.x-k8s.io/v1alpha3
        Fields Type: FieldsV1
        fieldsV1:
          f:metadata:
            f:annotations:
              .:
              f:kubectl.kubernetes.io/last-applied-configuration:
            f:labels:
              .:
              f:cluster.x-k8s.io/cluster-name:
          f:spec:
            .:
            f:clusterName:
            f:selector:
              .:
              f:matchLabels:
                .:
                f:cluster.x-k8s.io/cluster-name:
            f:template:
              .:
              f:metadata:
                .:
                f:labels:
                  .:
                  f:cluster.x-k8s.io/cluster-name:
                  f:node-pool:
              f:spec:
                .:
                f:bootstrap:
                  .:
                  f:configRef:
                    .:
                    f:apiVersion:
                    f:kind:
                    f:name:
                f:clusterName:
                f:infrastructureRef:
                  .:
                  f:apiVersion:
                  f:kind:
                f:version:
        Manager: kubectl-client-side-apply
        Operation: Update
        Time: 2021-10-29T14:11:25Z
        API Version: cluster.x-k8s.io/v1alpha3
        Fields Type: FieldsV1
        fieldsV1:
          f:spec:
            f:template:
              f:spec:
                f:infrastructureRef:
                  f:name:
        Manager: kubectl-edit
        Operation: Update
        Time: 2021-11-01T11:25:51Z
        API Version: cluster.x-k8s.io/v1alpha3
        Fields Type: FieldsV1
        fieldsV1:
          f:spec:
            f:replicas:
        Manager: tanzu-plugin-cluster
        Operation: Update
        Time: 2021-11-01T12:33:35Z
        API Version: cluster.x-k8s.io/v1alpha3
        Fields Type: FieldsV1
        fieldsV1:
          f:metadata:
            f:annotations:
              f:machinedeployment.clusters.x-k8s.io/revision:
            f:ownerReferences:
              .:
              k:{"uid":"be507594-0c05-4d30-8ed6-56811733df23"}:
                .:
                f:apiVersion:
                f:kind:
                f:name:
                f:uid:
          f:status:
            .:
            f:availableReplicas:
            f:observedGeneration:
            f:phase:
            f:readyReplicas:
            f:replicas:
            f:selector:
            f:updatedReplicas:
        Manager: manager
        Operation: Update
        Time: 2021-11-01T14:30:38Z
      Owner References:
        API Version: cluster.x-k8s.io/v1alpha3
        Kind: Cluster
        Name: tkg-workload-01
        UID: be507594-0c05-4d30-8ed6-56811733df23
      Resource Version: 1665423
      UID: 5148e564-cf66-4581-8941-c3024c58967e
    Spec:
      Cluster Name: tkg-workload-01
      Min Ready Seconds: 0
      Progress Deadline Seconds: 600
      Replicas: 4
      Revision History Limit: 1
      Selector:
        Match Labels:
          cluster.x-k8s.io/cluster-name: tkg-workload-01
      Strategy:
        Rolling Update:
          Max Surge: 1
          Max Unavailable: 0
        Type: RollingUpdate
      Template:
        Metadata:
          Labels:
            cluster.x-k8s.io/cluster-name: tkg-workload-01
            Node - Pool: tkg-workload-01-worker-pool
        Spec:
          Bootstrap:
            Config Ref:
              API Version: bootstrap.cluster.x-k8s.io/v1alpha3
              Kind: KubeadmConfigTemplate
              Name: tkg-workload-01-md-0
          Cluster Name: tkg-workload-01
          Infrastructure Ref:
            API Version: infrastructure.cluster.x-k8s.io/v1alpha3
            Kind: VSphereMachineTemplate
            Name: tkg-workload-01-worker
          Version: v1.21.2+vmware.1
    Status:
      Available Replicas: 4
      Observed Generation: 7
      Phase: Running
      Ready Replicas: 4
      Replicas: 4
      Selector: cluster.x-k8s.io/cluster-name=tkg-workload-01
      Updated Replicas: 4
    Events:
      Type    Reason           Age                 From                          Message
      ----    ------           ----                ----                          -------
      Normal  SuccessfulScale  90s (x2 over 114m)  machinedeployment-controller  Scaled down MachineSet "tkg-workload-01-md-0-647645ddcd" to 4

The line that we are interested in is the Name field under Infrastructure Ref, near the end of the Spec section. This is the machine template that this cluster is currently using. As expected, it still points to the original template, tkg-workload-01-worker. We need to change it to the new template that we created earlier, which we named tkg-workload-01-worker-scale.
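
Rather than scanning the long describe output, the same field can be read directly with a jsonpath query. This is a small sketch; current_template is a hypothetical helper name chosen for this example.

```shell
# Print the name of the machine template a MachineDeployment currently references.
# current_template is an illustrative helper, not an existing command.
current_template() {
  kubectl get machinedeployment "$1" \
    -o jsonpath='{.spec.template.spec.infrastructureRef.name}'
}

# Usage (needs access to the cluster that holds the MachineDeployment):
#   current_template tkg-workload-01-md-0
```

Running it again after the next step is a quick way to confirm that the reference has changed.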

Step 6.

kubectl edit MachineDeployment tkg-workload-01-md-0

    # Please edit the object below. Lines beginning with a '#' will be ignored,
    # and an empty file will abort the edit. If an error occurs while saving this file will be
    # reopened with the relevant failures.
    #
    apiVersion: cluster.x-k8s.io/v1alpha3
    kind: MachineDeployment
    metadata:
      annotations:
        kubectl.kubernetes.io/last-applied-configuration: |
          {"apiVersion":"cluster.x-k8s.io/v1alpha3","kind":"MachineDeployment","metadata":{"annotations":{},"labels":{"cluster.x-k8s.io/cluster-name":"tkg-workload-01"},"name":"tkg-workload-01-md-0","namespace":"default"},"spec":{"clusterName":"tkg-workload-01","replicas":4,"selector":{"matchLabels":{"cluster.x-k8s.io/cluster-name":"tkg-workload-01"}},"template":{"metadata":{"labels":{"cluster.x-k8s.io/cluster-name":"tkg-workload-01","node-pool":"tkg-workload-01-worker-pool"}},"spec":{"bootstrap":{"configRef":{"apiVersion":"bootstrap.cluster.x-k8s.io/v1alpha3","kind":"KubeadmConfigTemplate","name":"tkg-workload-01-md-0"}},"clusterName":"tkg-workload-01","infrastructureRef":{"apiVersion":"infrastructure.cluster.x-k8s.io/v1alpha3","kind":"VSphereMachineTemplate","name":"tkg-workload-01-worker"},"version":"v1.21.2+vmware.1"}}}}
        machinedeployment.clusters.x-k8s.io/revision: "3"
      creationTimestamp: "2021-10-29T14:11:25Z"
      generation: 7
      labels:
        cluster.x-k8s.io/cluster-name: tkg-workload-01
      name: tkg-workload-01-md-0
      namespace: default
      ownerReferences:
      - apiVersion: cluster.x-k8s.io/v1alpha3
        kind: Cluster
        name: tkg-workload-01
        uid: be507594-0c05-4d30-8ed6-56811733df23
      resourceVersion: "1665423"
      uid: 5148e564-cf66-4581-8941-c3024c58967e
    spec:
      clusterName: tkg-workload-01
      minReadySeconds: 0
      progressDeadlineSeconds: 600
      replicas: 4
      revisionHistoryLimit: 1
      selector:
        matchLabels:
          cluster.x-k8s.io/cluster-name: tkg-workload-01
      strategy:
        rollingUpdate:
          maxSurge: 1
          maxUnavailable: 0
        type: RollingUpdate
      template:
        metadata:
          labels:
            cluster.x-k8s.io/cluster-name: tkg-workload-01
            node-pool: tkg-workload-01-worker-pool
        spec:
          bootstrap:
            configRef:
              apiVersion: bootstrap.cluster.x-k8s.io/v1alpha3
              kind: KubeadmConfigTemplate
              name: tkg-workload-01-md-0
          clusterName: tkg-workload-01
          infrastructureRef:
            apiVersion: infrastructure.cluster.x-k8s.io/v1alpha3
            kind: VSphereMachineTemplate
            name: tkg-workload-01-worker-scale
          version: v1.21.2+vmware.1
    status:
      availableReplicas: 4
      observedGeneration: 7
      phase: Running
      readyReplicas: 4
      replicas: 4
      selector: cluster.x-k8s.io/cluster-name=tkg-workload-01
      updatedReplicas: 4

The line that we are interested in is the name field under spec.template.spec.infrastructureRef. We need to change the machine template from the old one to our new one.

Let's make that change by going to that name line and adding “-scale” to the end of it (the listing above already shows the new value). Once you save and quit using “:wq!”, Kubernetes, through Cluster API, will do a rolling update of the worker nodes in your TKGm cluster for you.

Finishing off

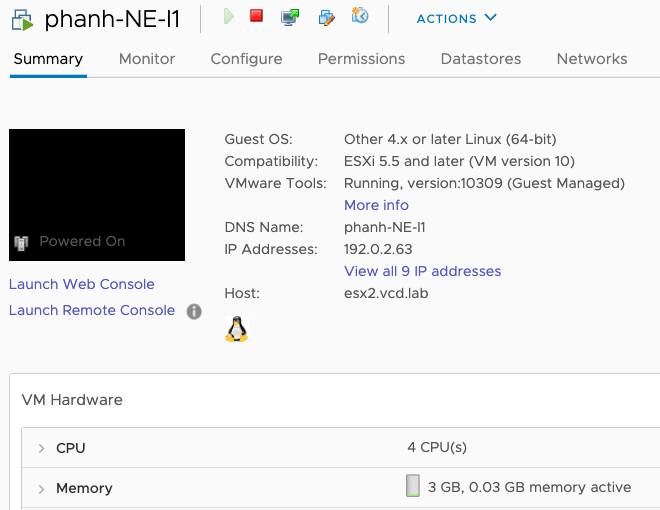

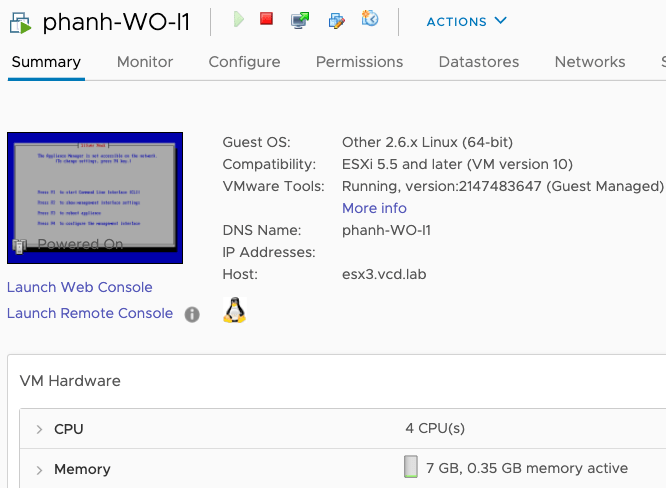

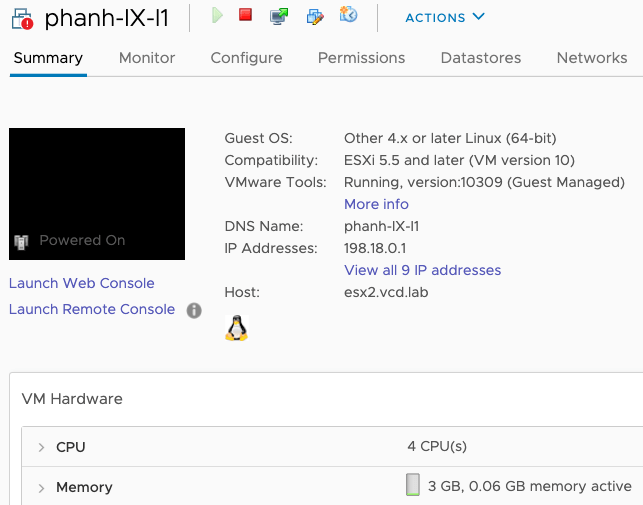

While the rolling update is running, you can watch the vSphere Web Client to see new VMs being cloned and old ones being deleted. You can also run the command below to see the status of the rolling update.

kubectl get MachineDeployment
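
To wait for the update from a script instead of re-running the command by hand, you could compare the replica counters that the MachineDeployment reports. The check below is a rough heuristic sketch, assuming the rollout is finished when the desired, current, updated and ready replica counts all match; rollout_done is a hypothetical helper name.

```shell
# Succeed when desired, current, updated and ready replica counts are all equal.
# rollout_done is an illustrative helper; the equality check is a heuristic.
rollout_done() {
  counts=$(kubectl get machinedeployment "$1" \
    -o jsonpath='{.spec.replicas} {.status.replicas} {.status.updatedReplicas} {.status.readyReplicas}')
  # Split the four numbers into positional parameters.
  set -- $counts
  [ "$#" -eq 4 ] && [ "$1" = "$2" ] && [ "$1" = "$3" ] && [ "$1" = "$4" ]
}

# Usage: poll every 30 seconds until the rollout has settled.
#   until rollout_done tkg-workload-01-md-0; do sleep 30; done
```

If any counter is missing from the status, the check fails, and the loop keeps waiting.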

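You’ll then see that your worker nodes have been replaced with resized ones. Because the update is rolling (maxSurge: 1, maxUnavailable: 0), a new node is added before an old one is removed, so the cluster keeps its full worker count throughout; pods on the old nodes are rescheduled onto the new ones.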