Setting up the VCD CSI driver on your Kubernetes cluster

Container Service Extension (CSE) 3.1.1 now supports persistent volumes that are backed by VCD’s Named Disk feature. These now appear under Storage – Named disks in VCD. To use this functionality today (28 September 2021), you’ll need to deploy CSE 3.1.1 beta with VCD 10.3. See this previous post for details.

Ideally, deploy the CSI driver as the same user that deployed the Kubernetes cluster into VCD. In my environment, that user is tenant1-admin, which has the Organization Administrator role with one added right:

Compute – Organization VDC – Create a Shared Disk.

Create the vcloud-basic-auth.yaml

Before you can create persistent volumes, you have to set up the Kubernetes cluster with the VCD CSI driver.

Ensure you can log into the cluster by downloading the kubeconfig and selecting the correct context.

kubectl config get-contexts

CURRENT   NAME                          CLUSTER      AUTHINFO           NAMESPACE
*         kubernetes-admin@kubernetes   kubernetes   kubernetes-admin

Create the vcloud-basic-auth.yaml file, which holds the credentials used to set up the VCD CSI driver for this Kubernetes cluster.

VCDUSER=$(echo -n 'tenant1-admin' | base64)
PASSWORD=$(echo -n 'Vmware1!' | base64)
cat > vcloud-basic-auth.yaml << END
---
apiVersion: v1
kind: Secret
metadata:
  name: vcloud-basic-auth
  namespace: kube-system
data:
  username: "$VCDUSER"
  password: "$PASSWORD"
END
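Values under data: in a Secret must be base64 of the exact string, so a stray trailing newline would corrupt the credential. The following is a minimal sketch of a round-trip check, assuming GNU coreutils base64 (the -d flag) and using printf instead of echo -n, because echo -n is not portable across shells. The DECODED variable is introduced here for illustration only.

```shell
#!/bin/sh
# Encode with printf so no trailing newline sneaks into the value.
VCDUSER=$(printf '%s' 'tenant1-admin' | base64)

# Decode again to confirm the round trip (-d is the GNU coreutils flag).
DECODED=$(printf '%s' "$VCDUSER" | base64 -d)

if [ "$DECODED" = 'tenant1-admin' ]; then
  echo "username encodes cleanly: $VCDUSER"
else
  echo "round trip failed: got '$DECODED'" >&2
fi
```

The same check works for the password variable before you write the file.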

Apply the secret to the Kubernetes cluster so that the CSI driver can authenticate to VCD.

kubectl apply -f vcloud-basic-auth.yaml

You should see three new pods starting in the kube-system namespace.

kube-system   csi-vcd-controllerplugin-0                  3/3   Running   0   43m   100.96.1.10     node-xgsw   <none>   <none>
kube-system   csi-vcd-nodeplugin-bckqx                    2/2   Running   0   43m   192.168.0.101   node-xgsw   <none>   <none>
kube-system   vmware-cloud-director-ccm-99fd59464-swh29   1/1   Running   0   43m   192.168.0.100   mstr-31jt   <none>   <none>
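If the pods take a while to come up, it can help to filter the listing for anything that is not fully ready. This is a rough sketch, assuming the column layout of kubectl get po -A (NAMESPACE, NAME, READY first). The not_ready helper is hypothetical, and the last sample row is made up to show a failing case.

```shell
#!/bin/sh
# not_ready: read `kubectl get po -A -o wide` rows on stdin and print the
# name of every pod whose READY column (for example 2/3) is not complete.
# Hypothetical helper, not part of kubectl or the CSI driver.
not_ready() {
  awk '$1 != "NAMESPACE" { split($3, r, "/"); if (r[1] != r[2]) print $2 }'
}

# Sample rows in the same layout as the listing above.
# The third row is invented to demonstrate a pod that is still starting.
SAMPLE='kube-system csi-vcd-controllerplugin-0 3/3 Running 0 43m
kube-system csi-vcd-nodeplugin-bckqx 2/2 Running 0 43m
kube-system example-pending-pod 0/1 Pending 0 1m'

PENDING=$(printf '%s\n' "$SAMPLE" | not_ready)
echo "pods still starting: ${PENDING:-none}"
```

Against a live cluster you would pipe real output into it, for example kubectl get po -A -o wide | not_ready.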

Set up a Storage Class

Here’s my storage-class.yaml file, which is used to set up the storage class for my Kubernetes cluster.

kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  annotations:
    storageclass.kubernetes.io/is-default-class: "false"
  name: vcd-disk-dev
provisioner: named-disk.csi.cloud-director.vmware.com
reclaimPolicy: Delete
parameters:
  storageProfile: "truenas-iscsi-luns"
  filesystem: "ext4"

Notice that storageProfile must be set either to “*”, which allows any storage policy, or to the name of a storage policy that you have access to in your Organization VDC.

Create the storage class by applying that file.

kubectl apply -f storage-class.yaml

You can see if that was successful by getting all storage classes.

kubectl get storageclass

NAME           PROVISIONER                                RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
vcd-disk-dev   named-disk.csi.cloud-director.vmware.com   Delete          Immediate           false                  43h
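If you need more than one storage class, say one per storage policy plus a catch-all using “*”, the manifest can be generated the same way the secret file was. This is only a sketch: render_storage_class is a hypothetical helper, vcd-disk-any is a made-up class name, and the field values simply mirror the storage-class.yaml shown earlier.

```shell
#!/bin/sh
# render_storage_class NAME PROFILE: print a StorageClass manifest.
# Hypothetical helper; the fields mirror the storage-class.yaml in this post.
render_storage_class() {
  cat << END
---
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  annotations:
    storageclass.kubernetes.io/is-default-class: "false"
  name: $1
provisioner: named-disk.csi.cloud-director.vmware.com
reclaimPolicy: Delete
parameters:
  storageProfile: "$2"
  filesystem: "ext4"
END
}

# Quote the asterisk so the shell does not expand it as a glob.
MANIFEST=$(render_storage_class vcd-disk-any '*')
printf '%s\n' "$MANIFEST"
```

To create the class directly, the output can be piped to kubectl, for example render_storage_class vcd-disk-dev truenas-iscsi-luns | kubectl apply -f -.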

Make the storage class the default

kubectl patch storageclass vcd-disk-dev -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'

Using the VCD CSI driver

Now that we’ve got the driver installed and a storage class defined, we can deploy a persistent volume claim and attach it to a pod. Let’s create the persistent volume claim first.

Creating a persistent volume claim

We need to prepare another file. I’ve called mine my-pvc.yaml, and it looks like this.

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: my-pvc
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
  storageClassName: "vcd-disk-dev"

Let’s deploy it.

kubectl apply -f my-pvc.yaml

We can check that it deployed with this command

kubectl get pvc

NAME     STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
my-pvc   Bound    pvc-2ddeccd0-e092-4aca-a090-dff9694e2f04   1Gi        RWO            vcd-disk-dev   36m

Attaching the persistent volume to a pod

Let’s deploy an nginx pod that mounts the PV and serves its web content from it.

My pod.yaml looks like this.

apiVersion: v1
kind: Pod
metadata:
  name: pod
  labels:
    app: nginx
spec:
  volumes:
    - name: my-pod-storage
      persistentVolumeClaim:
        claimName: my-pvc
  containers:
    - name: my-pod-container
      image: nginx
      ports:
        - containerPort: 80
          name: "http-server"
      volumeMounts:
        - mountPath: "/usr/share/nginx/html"
          name: my-pod-storage

Note that claimName under persistentVolumeClaim is set to my-pvc, which must match the name of the PVC created earlier. I’ve also mounted the volume at /usr/share/nginx/html inside the nginx pod.

Let’s attach the PV.
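A mismatch between the two names leaves the pod stuck in Pending, so a quick comparison before applying can save some time. The sketch below is a plain-text check, not a real YAML parser: yaml_value is a hypothetical helper that returns the value of the first matching key, and the two temporary files are trimmed stand-ins for my-pvc.yaml and pod.yaml.

```shell
#!/bin/sh
# yaml_value KEY FILE: print the value of the first "KEY: value" line.
# Hypothetical helper; it strips leading spaces and list dashes, then
# matches the key textually. It does not understand YAML structure.
yaml_value() {
  awk -v key="$1" '
    { sub(/^[ \t-]+/, "") }
    index($0, key ":") == 1 {
      sub(/^[^:]*:[ \t]*/, ""); gsub(/"/, ""); print; exit
    }' "$2"
}

# Trimmed copies of the two manifests, written to a scratch directory.
WORK=$(mktemp -d)
cat > "$WORK/my-pvc.yaml" << END
metadata:
  name: my-pvc
END
cat > "$WORK/pod.yaml" << END
spec:
  volumes:
    - name: my-pod-storage
      persistentVolumeClaim:
        claimName: my-pvc
END

PVC_NAME=$(yaml_value name "$WORK/my-pvc.yaml")
CLAIM=$(yaml_value claimName "$WORK/pod.yaml")
if [ "$PVC_NAME" = "$CLAIM" ]; then
  echo "claimName matches PVC name: $CLAIM"
else
  echo "mismatch: PVC is '$PVC_NAME' but pod claims '$CLAIM'" >&2
fi
rm -rf "$WORK"
```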

kubectl apply -f pod.yaml

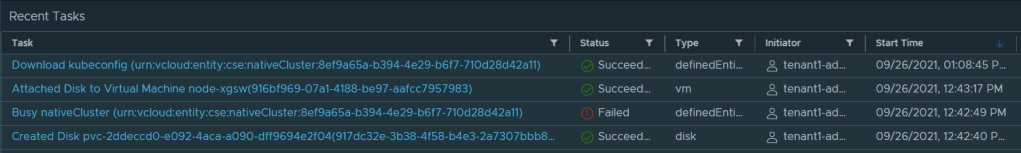

You’ll see a few things happen in the Recent Tasks pane when you run this. Kubernetes attaches the PV to the nginx pod through the CSI driver, and the driver tells VCD to attach the disk to the worker node.

If you open up vSphere Web Client, you can see that the disk is now attached to the worker node.

You can also see the CSI driver doing its thing if you take a look at the logs with this command.

kubectl logs csi-vcd-controllerplugin-0 -n kube-system -c csi-attacher

Checking the mount in the pod

You can log into the nginx pod using this command.

kubectl exec -it pod -- bash

Then run df and mount to confirm that the volume is mounted and to check its size.

df

Filesystem     1K-blocks   Used   Available   Use%   Mounted on
/dev/sdb          999320   1288      929220     1%   /usr/share/nginx/html

mount

/dev/sdb on /usr/share/nginx/html type ext4 (rw,relatime)

The size is correct at roughly 1 GiB, and the disk is mounted.
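The df figure is a little under the 1Gi that was requested. A quick bit of shell arithmetic shows the gap. Attributing it to ext4 metadata and journal space is my assumption, not something the driver reports.

```shell
#!/bin/sh
REQUESTED_KIB=$((1024 * 1024))   # 1Gi expressed in 1K blocks
REPORTED_KIB=999320              # "1K-blocks" column from the df output

# Space not visible to df, most likely filesystem overhead (assumption).
OVERHEAD_KIB=$((REQUESTED_KIB - REPORTED_KIB))
OVERHEAD_MIB=$((OVERHEAD_KIB / 1024))   # integer division, rounds down

echo "requested: ${REQUESTED_KIB} KiB"
echo "reported:  ${REPORTED_KIB} KiB"
echo "overhead:  ${OVERHEAD_KIB} KiB (about ${OVERHEAD_MIB} MiB)"
```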

Describing the pod gives us more information.

kubectl describe po pod

Name:         pod
Namespace:    default
Priority:     0
Node:         node-xgsw/192.168.0.101
Start Time:   Sun, 26 Sep 2021 12:43:15 +0300
Labels:       app=nginx
Annotations:  <none>
Status:       Running
IP:           100.96.1.12
IPs:
  IP:  100.96.1.12
Containers:
  my-pod-container:
    Container ID:   containerd://6a194ac30dab7dc5a5127180af139e531e650bedbb140e4dc378c21869bd570f
    Image:          nginx
    Image ID:       docker.io/library/nginx@sha256:853b221d3341add7aaadf5f81dd088ea943ab9c918766e295321294b035f3f3e
    Port:           80/TCP
    Host Port:      0/TCP
    State:          Running
      Started:      Sun, 26 Sep 2021 12:43:34 +0300
    Ready:          True
    Restart Count:  0
    Environment:    <none>
    Mounts:
      /usr/share/nginx/html from my-pod-storage (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-xm4gd (ro)
Conditions:
  Type              Status
  Initialized       True
  Ready             True
  ContainersReady   True
  PodScheduled      True
Volumes:
  my-pod-storage:
    Type:       PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)
    ClaimName:  my-pvc
    ReadOnly:   false
  default-token-xm4gd:
    Type:        Secret (a volume populated by a Secret)
    SecretName:  default-token-xm4gd
    Optional:    false
QoS Class:       BestEffort
Node-Selectors:  <none>
Tolerations:     node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
                 node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
Events:          <none>

Useful commands

Show storage classes

kubectl get storageclass

Show persistent volumes and persistent volume claims

kubectl get pv,pvc

Show all pods running in the cluster

kubectl get po -A -o wide

Describe the nginx pod

kubectl describe po pod

Show logs for the CSI driver

kubectl logs csi-vcd-controllerplugin-0 -n kube-system -c csi-attacher

kubectl logs csi-vcd-controllerplugin-0 -n kube-system -c csi-provisioner

kubectl logs csi-vcd-controllerplugin-0 -n kube-system -c vcd-csi-plugin

kubectl logs vmware-cloud-director-ccm-99fd59464-swh29 -n kube-system

Useful links

https://github.com/vmware/cloud-director-named-disk-csi-driver/blob/0.1.0-beta/README.md

You never actually explained how to install/configure the CSI driver, and the official README doesn’t either. I want to use this on a Rancher cluster which happens to be on the VCD platform. Would you mind elaborating please?