In the previous post I prepared NSX ALB for Tanzu Kubernetes Grid ingress services. In this post I will deploy a new TKG cluster and use it for Tanzu Shared Services.

Tanzu Kubernetes Grid includes binaries for tools that provide in-cluster and shared services to the clusters running in your Tanzu Kubernetes Grid instance. All of the provided binaries and container images are built and signed by VMware.

A shared services cluster is just a Tanzu Kubernetes Grid workload cluster used for shared services. It can be provisioned using the standard CLI command tanzu cluster create, or through Tanzu Mission Control.

You can add functionalities to Tanzu Kubernetes clusters by installing extensions to different cluster locations as follows:

| Function | Extension | Location | Procedure |
| --- | --- | --- | --- |
| Ingress Control | Contour | Tanzu Kubernetes or Shared Service cluster | Implementing Ingress Control with Contour |
| Service Discovery | External DNS | Tanzu Kubernetes or Shared Service cluster | Implementing Service Discovery with External DNS |
| Log Forwarding | Fluent Bit | Tanzu Kubernetes cluster | Implementing Log Forwarding with Fluentbit |
| Container Registry | Harbor | Shared Services cluster | Deploy Harbor Registry as a Shared Service |
| Monitoring | Prometheus and Grafana | Tanzu Kubernetes cluster | Implementing Monitoring with Prometheus and Grafana |

The Harbor service runs on a shared services cluster, to serve all the other clusters in an installation. The Harbor service requires the Contour service to also run on the shared services cluster. In many environments, the Harbor service also benefits from External DNS running on its cluster, as described in Harbor Registry and External DNS.

Some extensions require or are enhanced by other extensions deployed to the same cluster:

- Contour is required by Harbor, External DNS, and Grafana

- Prometheus is required by Grafana

- External DNS is recommended for Harbor on infrastructures with load balancing (AWS, Azure, and vSphere with NSX Advanced Load Balancer), especially in production or other environments in which Harbor availability is important.

Each Tanzu Kubernetes Grid instance can only have one shared services cluster.

Relationships

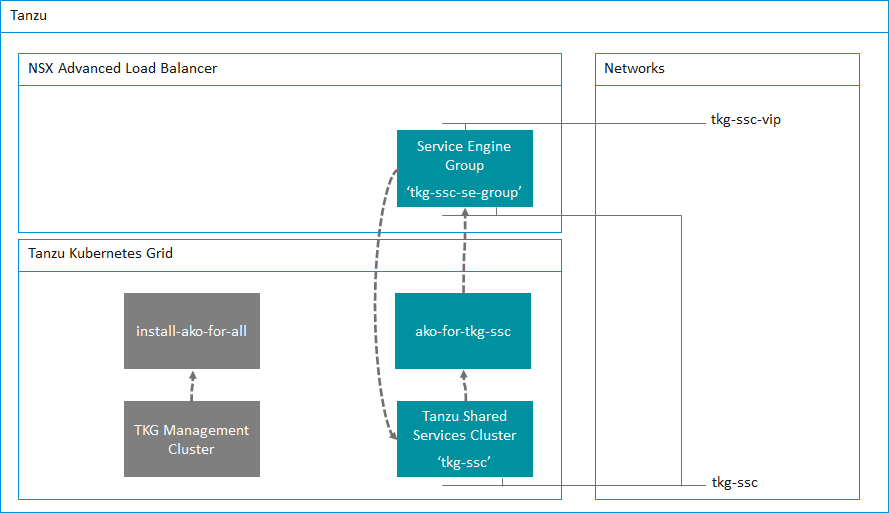

The following table shows the relationships between the NSX ALB system, the TKG cluster deployment config and the AKO config. It is important to get these three correct.

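| Avi Controller | TKG cluster deployment file | AKO Config file |
| --- | --- | --- |
| Service Engine Group name: tkg-ssc-se-group | AVI_SERVICE_ENGINE_GROUP: tkg-ssc-se-group | serviceEngineGroup: tkg-ssc-se-group |
| | AVI_LABELS: 'cluster': 'tkg-ssc' | clusterSelector: matchLabels: cluster: tkg-ssc |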

TKG Cluster Deployment Config File – tkg-ssc.yaml

Let's first take a look at the deployment configuration file for the Shared Services Cluster.

Two key-value pairs are particularly important in this file. The first is

AVI_LABELS: |
    'cluster': 'tkg-ssc'

This labels the TKG cluster so that Avi knows about it. The second is

AVI_SERVICE_ENGINE_GROUP: tkg-ssc-se-group

This ensures that this TKG cluster will use the service engine group named tkg-ssc-se-group.

While we have this file open, note that the long value under AVI_CA_DATA_B64 is the base64-encoded Avi Controller certificate that I copied in the previous post.
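A quick way to sanity-check the pasted value is to decode it back to PEM. The sketch below assumes the key and its value sit on a single line in the config file. The helper name avi_ca_pem is hypothetical and not part of any Tanzu tooling.

```shell
# Print the PEM certificate stored base64-encoded under AVI_CA_DATA_B64.
# Assumption: the key and its value are on one line of the config file.
avi_ca_pem() {
  sed -n 's/^AVI_CA_DATA_B64:[[:space:]]*//p' "$1" | head -n 1 | base64 -d
}

# Example (requires openssl): show the subject and expiry of the certificate.
# avi_ca_pem tkg-ssc.yaml | openssl x509 -noout -subject -enddate
```

If the decoded output does not start with a BEGIN CERTIFICATE line, the value was probably truncated or wrapped when it was pasted.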

Take some time to review my cluster deployment config file for the Shared Services Cluster below. You’ll see that you will need to specify the VIP network for NSX ALB to use

AVI_DATA_NETWORK: tkg-ssc-vip

AVI_DATA_NETWORK_CIDR: 172.16.4.32/27

Basically, any key that begins with AVI_ needs to have the corresponding setting configured in NSX ALB. This is what we prepared in the previous post.
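To get a checklist of what has to exist on the NSX ALB side, it can help to list the AVI_ keys a config file sets. This is a minimal sketch that only does a text match on the file. The function name list_avi_keys is made up for illustration.

```shell
# List every AVI_* key set in a TKG cluster configuration file.
# Text match only: it does not parse YAML or validate the values.
list_avi_keys() {
  grep -E '^AVI_[A-Z0-9_]+:' "$1" | cut -d: -f1
}

# Example:
# list_avi_keys ~/.tanzu/tkg/clusterconfigs/tkg-ssc.yaml
```

Each key it prints should map to something already configured in NSX ALB, such as the cloud, the VIP network or the service engine group.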

root@photon-manager [ ~/.tanzu/tkg/clusterconfigs ]# cat tkg-ssc.yaml

AVI_CA_DATA_B64: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUZIakNDQkFhZ0F3SUJBZ0lTQXo2SUpRV3hvMDZBUUdZaXkwUGpyN0pqTUEwR0NTcUdTSWIzRFFFQkN3VUEKTURJeEN6QUpCZ05WQkFZVEFsVlRNUll3RkFZRFZRUUtFdzFNWlhRbmN5QkZibU55ZVhCME1Rc3dDUVlEVlFRRApFd0pTTXpBZUZ3MHlNVEEzTURnd056TXlORGRhRncweU1URXdNRFl3TnpNeU5EWmFNQmN4RlRBVEJnTlZCQU1NCkRDb3VkbTEzYVhKbExtTnZiVENDQVNJd0RRWUpLb1pJaHZjTkFRRUJCUUFEZ2dFUEFEQ0NBUW9DZ2dFQkFLVVkKbE9iZjk4QlF1cnhyaFNzWmFDRVFrMWxHWUJMd1REMTRITVlWZlNaOWE3WWRuNVFWZ2dKdUd6Um1tdVdFaWRTRgppaW1teTdsWHMyVTZjazdjOXhQZkxWOHpySEZjL2tEbDB4ZzZRWVNJa0wrRWdkWW9VeG1vTk95VzArc0FrTEFQCkZaU1JYUW44K3BiYlJDVFZ2L3ErTjBueUJzNlZyTVJySjA3UEhwck9qTE01WHo2eEJxR2E5WXEzbUhNZ0lXaXoKYXVVam84ZnBrYllxMlpKSlkzVXFxNkhBTEw3MDVTblJxZXk5c05pOEJkT2RtTk5ka1ovZXBacUlCRDZQZk1WegpEWVVEV2h0OUczR2NXcGxUT1AweDJTYmZ0MEFCUWYvU1BEWHlBSFBYYWhoNThKc1kwNHd5ZE04QWNQRkRwbUc5CjRvTnpoaTdTVXJLdDJ4cStXazhDQXdFQUFhT0NBa2N3Z2dKRE1BNEdBMVVkRHdFQi93UUVBd0lGb0RBZEJnTlYKSFNVRUZqQVVCZ2dyQmdFRkJRY0RBUVlJS3dZQkJRVUhBd0l3REFZRFZSMFRBUUgvQkFJd0FEQWRCZ05WSFE0RQpGZ1FVbk1QeDFQWUlOL1kvck5WL2FNYU9MNVBzUGtRd0h3WURWUjBqQkJnd0ZvQVVGQzZ6RjdkWVZzdXVVQWxBCjVoK3ZuWXNVd3NZd1ZRWUlLd1lCQlFVSEFRRUVTVEJITUNFR0NDc0dBUVVGQnpBQmhoVm9kSFJ3T2k4dmNqTXUKYnk1c1pXNWpjaTV2Y21jd0lnWUlLd1lCQlFVSE1BS0dGbWgwZEhBNkx5OXlNeTVwTG14bGJtTnlMbTl5Wnk4dwpGd1lEVlIwUkJCQXdEb0lNS2k1MmJYZHBjbVV1WTI5dE1Fd0dBMVVkSUFSRk1FTXdDQVlHWjRFTUFRSUJNRGNHCkN5c0dBUVFCZ3Q4VEFRRUJNQ2d3SmdZSUt3WUJCUVVIQWdFV0dtaDBkSEE2THk5amNITXViR1YwYzJWdVkzSjUKY0hRdWIzSm5NSUlCQkFZS0t3WUJCQUhXZVFJRUFnU0I5UVNCOGdEd0FIWUFSSlJsTHJEdXpxL0VRQWZZcVA0bwp3TnJtZ3I3WXl6RzFQOU16bHJXMmdhZ0FBQUY2aFQ5NlpRQUFCQU1BUnpCRkFpQlRkTUErcUdlQmM4cnpvREtMClFQaGgya05aVitVMmFNVEJacGYyaFpFRnpBSWhBT2Y2bVZFQkMwVlY3VWlUNC9tSWZWUEM2NUphL0RRc0xEOFkKMHFjQzdzNGhBSFlBZlQ3eStJLy9pRlZvSk1MQXlwNVNpWGtyeFE1NENYOHVhcGRvbVg0aThOY0FBQUY2aFQ5Ngpqd0FBQkFNQVJ6QkZBaUVBeEx1SDJTS2ZlVThpRUd6NWd4dmc2ME8zSmxmY25mN2JKS3h2K1dzUENPUUNJRjArCm1aV0psQSt2Uy85cjhPbmZ5cElMTFhaamIydzBEQlREWTN6WlErOUxNQTBHQ1NxR1NJYjNEUUVCQ3dVQUE0SUIKQVFDczNYeUhJbUF2T25JdjVMdm1nZ2l5M0s0Q1J
1Zkg5ZmZWc1RtSDkwSzZETmorWkNySDEvNlJBUUREek45SApYZDJJd1BCWkJHcURocmYvZ0xOWTg2NHlkT2pPRHFuSXdsNVFDb095c0h4NGtISnFXdmpwVVF3d3FiS3lZeDZCCmkxalRtU0RTZmV0OHVtTUtMZ1Z3djBHdnZaV0daYmNUaVJCMEIycW5MR0VPbDJ2WnoyQlhkK1RGMmgyUGRkMzkKTHFDYzJ6UFZOVnB4WVR3OVJ4U1Q1d2FiR2doK0pOVGl1aVVlOUFhQmxOcmRjYjJ0d3d5TnA4Y3krdEw2RXMyagpRbVB2WndMYVRGQngzNWJHeE9LNGF1NFM5SVBIUG84cVVQVVR6RVAwYWtyK2FqY3hOMXlPalRSRENzWEIrcitrCmQ5ZW9CVlFjbFJTa0dMbE5Nc044OEhlKwotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0t

AVI_CLOUD_NAME: vcenter.vmwire.com

AVI_CONTROLLER: avi.vmwire.com

AVI_DATA_NETWORK: tkg-ssc-vip

AVI_DATA_NETWORK_CIDR: 172.16.4.32/27

AVI_ENABLE: "true"

AVI_LABELS: |
    'cluster': 'tkg-ssc'

AVI_PASSWORD: <encoded:Vm13YXJlMSE=>

AVI_SERVICE_ENGINE_GROUP: tkg-ssc-se-group

AVI_USERNAME: admin

CLUSTER_CIDR: 100.96.0.0/11

CLUSTER_NAME: tkg-ssc

CLUSTER_PLAN: dev

ENABLE_CEIP_PARTICIPATION: "false"

ENABLE_MHC: "true"

IDENTITY_MANAGEMENT_TYPE: none

INFRASTRUCTURE_PROVIDER: vsphere

LDAP_BIND_DN: ""

LDAP_BIND_PASSWORD: ""

LDAP_GROUP_SEARCH_BASE_DN: ""

LDAP_GROUP_SEARCH_FILTER: ""

LDAP_GROUP_SEARCH_GROUP_ATTRIBUTE: ""

LDAP_GROUP_SEARCH_NAME_ATTRIBUTE: cn

LDAP_GROUP_SEARCH_USER_ATTRIBUTE: DN

LDAP_HOST: ""

LDAP_ROOT_CA_DATA_B64: ""

LDAP_USER_SEARCH_BASE_DN: ""

LDAP_USER_SEARCH_FILTER: ""

LDAP_USER_SEARCH_NAME_ATTRIBUTE: ""

LDAP_USER_SEARCH_USERNAME: userPrincipalName

OIDC_IDENTITY_PROVIDER_CLIENT_ID: ""

OIDC_IDENTITY_PROVIDER_CLIENT_SECRET: ""

OIDC_IDENTITY_PROVIDER_GROUPS_CLAIM: ""

OIDC_IDENTITY_PROVIDER_ISSUER_URL: ""

OIDC_IDENTITY_PROVIDER_NAME: ""

OIDC_IDENTITY_PROVIDER_SCOPES: ""

OIDC_IDENTITY_PROVIDER_USERNAME_CLAIM: ""

SERVICE_CIDR: 100.64.0.0/13

TKG_HTTP_PROXY_ENABLED: "false"

VSPHERE_CONTROL_PLANE_DISK_GIB: "20"

VSPHERE_CONTROL_PLANE_ENDPOINT: 172.16.3.58

VSPHERE_CONTROL_PLANE_MEM_MIB: "4096"

VSPHERE_CONTROL_PLANE_NUM_CPUS: "2"

VSPHERE_DATACENTER: /home.local

VSPHERE_DATASTORE: /home.local/datastore/vsanDatastore

VSPHERE_FOLDER: /home.local/vm/tkg-ssc

VSPHERE_NETWORK: tkg-ssc

VSPHERE_PASSWORD: <encoded:Vm13YXJlMSE=>

VSPHERE_RESOURCE_POOL: /home.local/host/cluster/Resources/tkg-ssc

VSPHERE_SERVER: vcenter.vmwire.com

VSPHERE_SSH_AUTHORIZED_KEY: ssh-rsa AAAAB3NzaC1yc2EAAAABJQAAAQEAhcw67bz3xRjyhPLysMhUHJPhmatJkmPUdMUEZre+MeiDhC602jkRUNVu43Nk8iD/I07kLxdAdVPZNoZuWE7WBjmn13xf0Ki2hSH/47z3ObXrd8Vleq0CXa+qRnCeYM3FiKb4D5IfL4XkHW83qwp8PuX8FHJrXY8RacVaOWXrESCnl3cSC0tA3eVxWoJ1kwHxhSTfJ9xBtKyCqkoulqyqFYU2A1oMazaK9TYWKmtcYRn27CC1Jrwawt2zfbNsQbHx1jlDoIO6FLz8Dfkm0DToanw0GoHs2Q+uXJ8ve/oBs0VJZFYPquBmcyfny4WIh4L0lwzsiAVWJ6PvzF5HMuNcwQ== rsa-key-20210508

VSPHERE_TLS_THUMBPRINT: D0:38:E7:8A:94:0A:83:69:F7:95:80:CD:99:9B:D3:3E:E4:DA:62:FA

VSPHERE_USERNAME: administrator@vsphere.local

VSPHERE_WORKER_DISK_GIB: "20"

VSPHERE_WORKER_MEM_MIB: "4096"

VSPHERE_WORKER_NUM_CPUS: "2"

AKODeploymentConfig – tkg-ssc-akodeploymentconfig.yaml

The next file we need to configure is the AKODeploymentConfig file. Kubernetes uses this file to ensure that L4 load balancing is handled by NSX ALB.

The following settings are important.

clusterSelector:

  matchLabels:

    cluster: tkg-ssc

Here we specify a cluster selector for AKO that matches on the cluster label, whose value here is the name of the cluster. This corresponds to the following setting in the tkg-ssc.yaml file.

AVI_LABELS: |
    'cluster': 'tkg-ssc'
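Because these two values must agree, a small check before applying anything can catch a typo early. The sketch below compares them with plain text matching and assumes both files look like the ones in this post. All three function names are hypothetical.

```shell
# Value of the 'cluster' key inside the AVI_LABELS block of a TKG cluster config.
avi_label_cluster() {
  sed -n "s/^[[:space:]]*'cluster':[[:space:]]*'\([^']*\)'.*/\1/p" "$1" | head -n 1
}

# Value of clusterSelector.matchLabels.cluster in an AKODeploymentConfig.
# Text match only: assumes a single "cluster:" line in the file.
ako_selector_cluster() {
  sed -n 's/^[[:space:]]*cluster:[[:space:]]*\([^[:space:]]*\).*/\1/p' "$1" | head -n 1
}

# Succeeds only when both values are present and identical.
labels_match() {
  cfg_label=$(avi_label_cluster "$1")
  ako_label=$(ako_selector_cluster "$2")
  [ -n "$cfg_label" ] && [ "$cfg_label" = "$ako_label" ]
}

# Example:
# labels_match tkg-ssc.yaml tkg-ssc-akodeploymentconfig.yaml && echo "labels agree"
```

A proper YAML parser would be more robust. This is only meant as a quick guard for files with this layout.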

The next key value pair specifies what Service Engines to use for this TKG cluster. This is of course what we configured within Avi in the previous post.

serviceEngineGroup: tkg-ssc-se-group

root@photon-manager [ ~/.tanzu/tkg/clusterconfigs ]# cat tkg-ssc-akodeploymentconfig.yaml

apiVersion: networking.tkg.tanzu.vmware.com/v1alpha1

kind: AKODeploymentConfig

metadata:

  finalizers:

  - ako-operator.networking.tkg.tanzu.vmware.com

  generation: 2

  name: ako-for-tkg-ssc

spec:

  adminCredentialRef:

    name: avi-controller-credentials

    namespace: tkg-system-networking

  certificateAuthorityRef:

    name: avi-controller-ca

    namespace: tkg-system-networking

  cloudName: vcenter.vmwire.com

  clusterSelector:

    matchLabels:

      cluster: tkg-ssc

  controller: avi.vmwire.com

  dataNetwork:

    cidr: 172.16.4.32/27

    name: tkg-ssc-vip

  extraConfigs:

    image:

      pullPolicy: IfNotPresent

      repository: projects-stg.registry.vmware.com/tkg/ako

      version: v1.3.2_vmware.1

    ingress:

      defaultIngressController: false

      disableIngressClass: true

  serviceEngineGroup: tkg-ssc-se-group

Set up the new AKO configuration before deploying the new TKG cluster

Before deploying the new TKG cluster, we have to set up a new AKO configuration. To do this, run the following command under the TKG Management Cluster context.

kubectl apply -f <Path_to_YAML_File>

Which in my example is

kubectl apply -f tkg-ssc-akodeploymentconfig.yaml

You can use the following to check that the configuration was applied.

kubectl get akodeploymentconfig

root@photon-manager [ ~/.tanzu/tkg/clusterconfigs ]# kubectl get akodeploymentconfig

NAME AGE

ako-for-tkg-ssc 3d19h

You can also show additional details by using the kubectl describe command

kubectl describe akodeploymentconfig ako-for-tkg-ssc

For any new AKO configs that you need, just take a copy of the .yaml file and edit the contents that correspond to the new AKO config. For example, to create another AKO config for a new tenant, take a copy of the tkg-ssc-akodeploymentconfig.yaml file and give it a new name such as tkg-tenant-1-akodeploymentconfig.yaml. Then change the metadata name, the clusterSelector cluster label, the dataNetwork cidr and name, and the serviceEngineGroup, as in the following file.

apiVersion: networking.tkg.tanzu.vmware.com/v1alpha1

kind: AKODeploymentConfig

metadata:

  finalizers:

  - ako-operator.networking.tkg.tanzu.vmware.com

  generation: 2

  name: ako-for-tenant-1

spec:

  adminCredentialRef:

    name: avi-controller-credentials

    namespace: tkg-system-networking

  certificateAuthorityRef:

    name: avi-controller-ca

    namespace: tkg-system-networking

  cloudName: vcenter.vmwire.com

  clusterSelector:

    matchLabels:

      cluster: tkg-tenant-1

  controller: avi.vmwire.com

  dataNetwork:

    cidr: 172.16.4.64/27

    name: tkg-tenant-1-vip

  extraConfigs:

    image:

      pullPolicy: IfNotPresent

      repository: projects-stg.registry.vmware.com/tkg/ako

      version: v1.3.2_vmware.1

    ingress:

      defaultIngressController: false

      disableIngressClass: true

  serviceEngineGroup: tkg-tenant-1-se-group

Create the Tanzu Shared Services Cluster

Now we can deploy the Shared Services Cluster with the file named tkg-ssc.yaml

tanzu cluster create --file /root/.tanzu/tkg/clusterconfigs/tkg-ssc.yaml

This will deploy the cluster according to that cluster spec.

Obtain credentials for shared services cluster tkg-ssc

tanzu cluster kubeconfig get tkg-ssc --admin

Add labels to the cluster tkg-ssc

Add the cluster role as a Tanzu Shared Services Cluster

kubectl label cluster.cluster.x-k8s.io/tkg-ssc cluster-role.tkg.tanzu.vmware.com/tanzu-services="" --overwrite=true

Check that label was applied

tanzu cluster list --include-management-cluster

root@photon-manager [ ~/.tanzu/tkg/clusterconfigs ]# tanzu cluster list --include-management-cluster

NAME NAMESPACE STATUS CONTROLPLANE WORKERS KUBERNETES ROLES PLAN

tkg-ssc default running 1/1 1/1 v1.20.5+vmware.2 tanzu-services dev

tkg-mgmt tkg-system running 1/1 1/1 v1.20.5+vmware.2 management dev

Label the cluster with the key-value pair cluster=tkg-ssc. This matches the clusterSelector in the AKODeploymentConfig and completes the setup of AKO.

kubectl label cluster tkg-ssc cluster=tkg-ssc
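To confirm the label landed on the right object, you can ask the management cluster for clusters matching the selector. This is a rough sketch, assuming kubectl is pointed at the management cluster context. The helper name cluster_has_label is hypothetical.

```shell
# Succeed if the Cluster object named $1 carries the label $2 (key=value).
# Assumption: the current kubectl context is the TKG management cluster.
cluster_has_label() {
  kubectl get clusters -l "$2" -o name | grep -q "/$1\$"
}

# Example:
# cluster_has_label tkg-ssc cluster=tkg-ssc && echo "label present"
```

The selector is passed with -l rather than alongside the cluster name, since kubectl does not accept a resource name and a label selector in the same get command.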

Once the cluster is labelled, switch to the tkg-ssc context and you will notice a new namespace named “avi-system” being created and a new pod named “ako-0” being started.

kubectl config use-context tkg-ssc-admin@tkg-ssc

kubectl get ns

kubectl get pods -n avi-system

root@photon-manager [ ~/.tanzu/tkg/clusterconfigs ]# kubectl config use-context tkg-ssc-admin@tkg-ssc

Switched to context "tkg-ssc-admin@tkg-ssc".

root@photon-manager [ ~/.tanzu/tkg/clusterconfigs ]# kubectl get ns

NAME STATUS AGE

avi-system Active 3d18h

cert-manager Active 3d15h

default Active 3d19h

kube-node-lease Active 3d19h

kube-public Active 3d19h

kube-system Active 3d19h

kubernetes-dashboard Active 3d16h

tanzu-system-monitoring Active 3d15h

tkg-system Active 3d19h

tkg-system-public Active 3d19h

root@photon-manager [ ~/.tanzu/tkg/clusterconfigs ]# kubectl get pods -n avi-system

NAME READY STATUS RESTARTS AGE

ako-0 1/1 Running 0 3d18h
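The namespace and pod can take a little while to appear after the label is applied, so a simple polling loop can save some re-typing. The sketch below is one possible approach, assuming the pod is named ako-0 in the avi-system namespace as shown above. The function name and its default retry values are made up for illustration.

```shell
# Poll until the ako-0 pod in avi-system reports phase Running.
# $1 = number of attempts (default 30), $2 = seconds between attempts (default 10).
wait_for_ako() {
  attempts=${1:-30}
  delay=${2:-10}
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # Errors are ignored because the namespace may not exist yet.
    phase=$(kubectl get pod ako-0 -n avi-system -o jsonpath='{.status.phase}' 2>/dev/null)
    if [ "$phase" = "Running" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# Example:
# kubectl config use-context tkg-ssc-admin@tkg-ssc
# wait_for_ako && kubectl get pods -n avi-system
```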

Summary

We now have a new TKG Shared Services Cluster up and running and configured for Kubernetes ingress services with NSX ALB.

In the next post I’ll deploy the Kubernetes Dashboard onto the Shared Services Cluster and show how this then configures the NSX ALB for ingress services.
